Racing Toward AGI: Sam Altman on Power, Safety, and the Future We're Building

We recently watched a very interesting, and honestly a bit tense, conversation between the head of TED, Chris Anderson, and Sam Altman. They discussed AI ethics and safety, copyright infringement, and Sam’s positive outlook on what AI means for the future of humanity. Below are some of the highlights and our thoughts on these topics 🙂

OpenAI’s success is inevitable.

OpenAI’s founding was surrounded by extremely talented individuals, making the company almost destined to be successful. Sam is a startup guy with a talent for strategic leadership, who is “extremely competitive, always consistent on winning the board game”. Greg Brockman was the CTO of Stripe and is much like Sam, but is also a genius engineer when it comes to coding. When asked “what an ideal cofounder looks like”, Sam wrote “Greg Brockman”. Ilya Sutskever is a brilliant AI researcher with a background in math, who earned a bachelor’s degree at the age of 19. Together with early backing from Elon Musk, OpenAI started out pretty much guaranteed to succeed.

The scariest thing about AI right now.

AGI has been the central goal from the beginning. The mission is to create the first general AI, distribute it, make it safe, and do “good for the world”.

But for a long time, OpenAI’s work focused on a narrower task: predicting the next word.

Current AI, for example ChatGPT, knows a lot. You can ask it anything, and you will (usually) get an intelligent-sounding answer. Its English is definitely better than mine. However, it is pretty bad at learning new things on its own, discovering new science, and effectively browsing the web to complete tasks by itself. Although there is no real agreement on what AGI means exactly (“Ask ten OpenAI researchers and you will get 14 different answers”), most agree that AGI would need to be able to figure out how to learn things it doesn’t already know and handle all kinds of tasks completely on its own. The point at which we can call it AGI is up for debate, but it is clear that progress in AI’s capabilities is not going to stop any time soon.

According to Sam, agentic AI will become huge within the next few months. We are starting to see small amounts of autonomy in agentic AI. We are going to see AI systems clicking around the internet. Some AI agents already have the power to go on the web and buy things for you, or go through your codebase and delete code or even files.

Giving AI full control is still scary, especially if it asks for your credit card details ;). As long as things can go horribly wrong, no one wants to take the risk.

A good product is a safe product.

So how does OpenAI plan to handle these risks?

Imagine asking a game-playing AI like AlphaGo to win a game, and what it does is poison its opponent so that it can win. It would be too late to tell it that people don’t like to be killed.

As AI becomes more capable, the risks grow with it.

Throughout history, no wave of technology has ever been fully contained, with the exception of nuclear power — for good reasons.

And with any new technology, it takes time for the public to accept it and become comfortable using it. Just 15 years ago, entering your credit card details online would have made you feel uncomfortable. Today it’s completely normal.

The Preparedness framework and iterative deployment strategy

According to Sam Altman, safety is a top priority for OpenAI’s products, and the company’s approach to it is what they call the “Preparedness Framework”.

For a product to be considered “good”, you have to ensure user confidence, and that means making safety a fundamental part of the product itself. By iteratively deploying imperfect systems and gathering feedback from users, OpenAI gains insights and learns from mistakes for the next generation of AI.

Mistakes will surely happen along the way, even some big ones. But this helps us get the technology right. We need to make those mistakes while the stakes are low, and so “we have to embrace this with caution but not fear”.

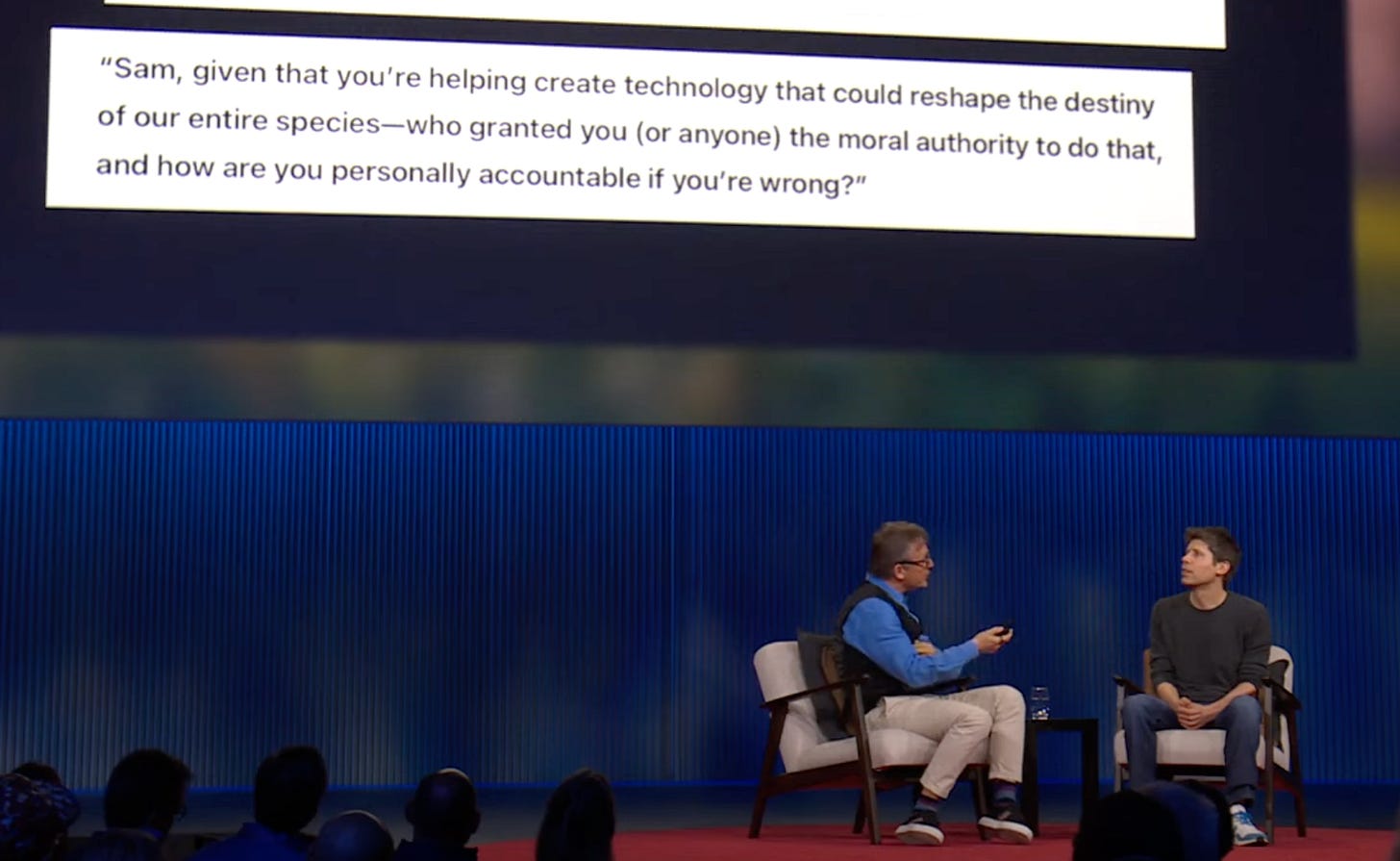

The moral authority question

When it comes to who has the moral authority to decide what AI should and shouldn’t do, Sam said that, unlike many times in history when decisions were made by small groups, AI can actually help us learn the values and preferences that represent what the entire world wants. In other words, direction and guardrails should come from broader societal input.

At the same time, as AI becomes smarter, one day it could in turn help our society be wiser and make better decisions. It could help us figure out exactly what we want and make smart choices together.

When asked what kind of future he envisions for his children, Sam described a future where AI is smarter than everyone and computers will understand you. One where the present day, looking back, would seem primitive and limited by comparison. One where our kids will “look back at us and think that we live horrible lives”.

Who is Sam Altman?

In the conversation, Chris Anderson mentioned that there are two narratives out there about Sam. One is of a successful and visionary leader who has done the impossible.

The other narrative is that Sam might be “corrupted by the Ring of Power”. The evidence cited for this is OpenAI’s transition to a for-profit structure and the fact that he has lost most of his key team members.

So, who is Sam Altman? What are his core values? We like that, in the interview, Altman handled the moment with a mix of humility and clarity. He acknowledged that, like every one of us, he is a “nuanced character” who does not just have “one dimension”. Some of the good things are probably true, and some criticisms are probably true as well.

But here is where it gets complicated. The race to AGI is on, and people have egos. There is no choice but to try to win that race. Do the power, the competition, and the wealth make it difficult to do the right things at the right pace?

We are currently reading Karen Hao’s book “Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI”, which adds more layers to Sam’s character. Sam Altman seems to be a very sophisticated and interesting character. For example, he is no longer best friends with Elon Musk, and Musk was earlier a senior advisor to Donald Trump. Altman realizes that the only person in the world who can protect him from Musk is Donald Trump himself, so he has built a strategic relationship with Washington. He knows that in the AI race, politics and power are inseparable.

Whether Sam’s intentions in bringing humanity to the next level through AGI are good or bad is still up for debate, but it is clear that he has successfully guided OpenAI in creating incredible and previously impossible achievements. As the world is watching, we cheer him on to do the right thing.